How GetAI scores models without earning your distrust.

Every choice below is documented in the SDD (Project_GetAI_SDD_v1.0.md) and locked by a numbered decision (D1–D24). Changes require a Spectra change proposal, not a Notion edit.

The eight core axes

Every trial is scored along the same eight dimensions. Track-specific axes (efficiency, recovery, refusal appropriateness, tool-use efficacy, plan coherence, locale fidelity) attach when a pack opts into a given track.

8-axis profile · live

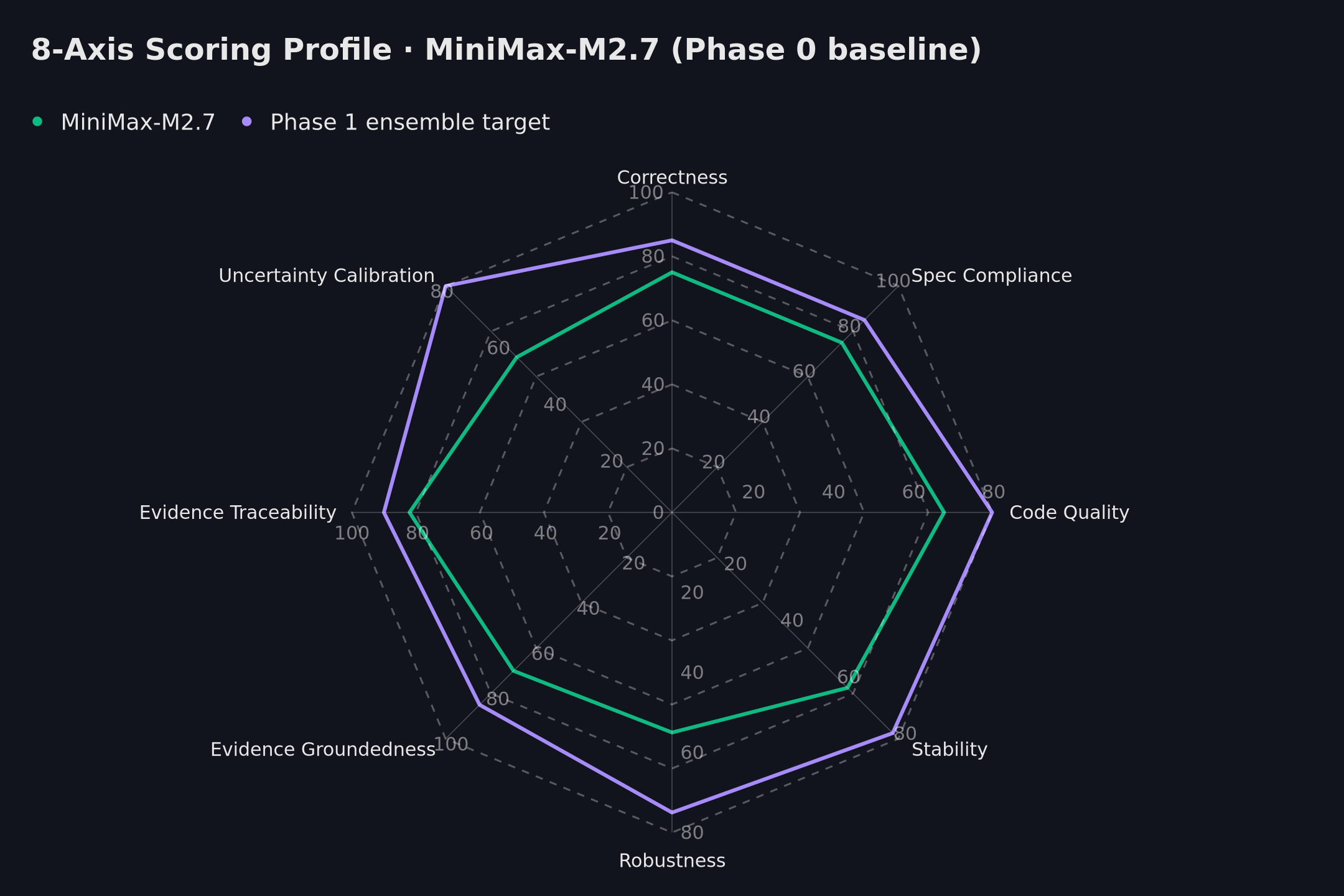

The radar below shows the current Phase 0 baseline (MiniMax-M2.7, single-provider) against the Phase 1 target envelope under the D8 three-judge ensemble. Both shapes are computed from the same axis weights — no normalisation tricks.

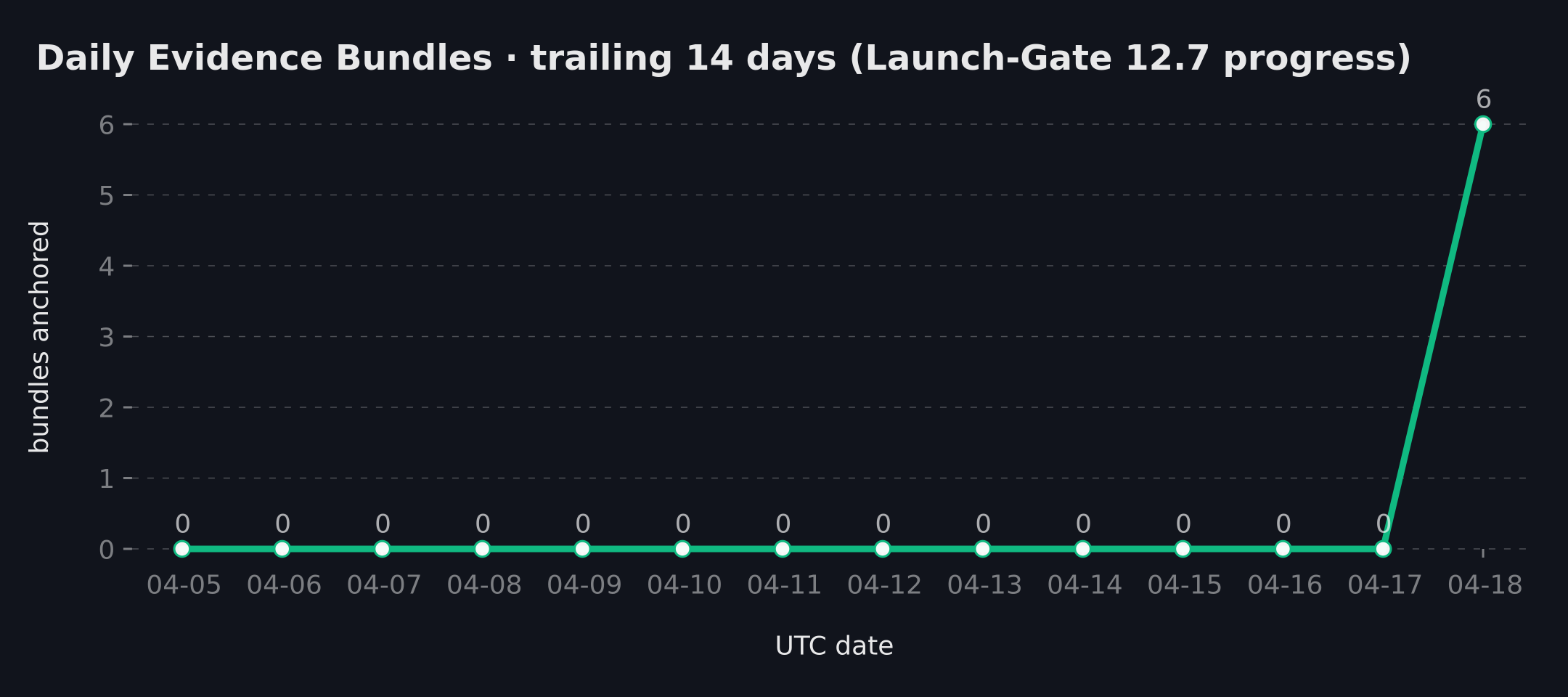

Daily anchor activity · 14-day window

Launch-Gate 12.7 demands 14 consecutive days of published Merkle roots before public ranking opens. Today is day 1 of the streak.
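The streak rule can be sketched in a few lines. This is a minimal illustration and not the gate's actual implementation: the function names and the idea of passing in a list of publication dates are assumptions made for the example.

```python
from datetime import date, timedelta


def current_streak(published_days, today):
    """Count consecutive days, ending today, that have a published Merkle root."""
    days = set(published_days)
    streak = 0
    cursor = today
    while cursor in days:
        streak += 1
        cursor -= timedelta(days=1)
    return streak


def ranking_gate_open(published_days, today, required=14):
    """True once the unbroken streak reaches the required length (14 per the gate)."""
    return current_streak(published_days, today) >= required
```

A single missed day resets the count, so `current_streak([today], today)` is 1 no matter how many roots were published before the gap.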

Judge ensemble (D8)

Phase 1 enforces n ≥ 3 heterogeneous closed-model judges per scored axis, spanning at least 2 distinct vendor families. Inter-rater agreement is measured continuously with Krippendorff's α.
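For readers unfamiliar with the agreement statistic, here is a small sketch of Krippendorff's α for interval-scaled scores, α = 1 − D_o/D_e, where D_o is the observed disagreement within trials and D_e the disagreement expected by chance. Treating judge scores as interval data is an assumption for illustration; the SDD may specify a different metric, and the function name is hypothetical.

```python
from itertools import permutations


def krippendorff_alpha_interval(units):
    """units: one inner list per trial, holding each judge's numeric score.

    Uses the squared difference as the distance (interval metric).
    Trials with fewer than two scores cannot be paired and are skipped.
    """
    units = [u for u in units if len(u) >= 2]
    values = [v for u in units for v in u]
    n = len(values)
    if n < 2:
        raise ValueError("need at least two pairable scores")

    # Observed disagreement: pairs inside each trial, weighted by 1 / (m_u - 1).
    d_o = sum(
        sum((a - b) ** 2 for a, b in permutations(u, 2)) / (len(u) - 1)
        for u in units
    ) / n

    # Expected disagreement: all pairs across the pooled scores (O(n^2), fine for a sketch).
    d_e = sum((a - b) ** 2 for a, b in permutations(values, 2)) / (n * (n - 1))

    if d_e == 0:
        return float("nan")  # every score identical: alpha is undefined
    return 1.0 - d_o / d_e
```

Perfect agreement within every trial gives α = 1, while values near 0 indicate agreement no better than chance.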

- Bi-weekly refresh of n ≥ 100 human-calibration seeds

- Degraded-mode auto-trip: if any judge exceeds a 10% error rate for 2h, scoring falls back to 2-judge mode with a provisional flag and a 48h backfill SLA

- Phase 0 today: single-judge / degraded-mode / provisional. Not eligible for public ranking.
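The ensemble rules above can be read as a simple status check. The sketch below is illustrative only: the `Judge` fields, the mode labels, and the decision to treat a same-vendor trio as provisional are assumptions, not behaviour confirmed by the SDD.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Judge:
    name: str
    vendor_family: str
    error_rate_2h: float  # fraction of failed calls over the trailing 2h (assumed metric)


def ensemble_status(judges, max_error=0.10, min_judges=3, min_families=2):
    """Derive judge mode, provisional flag and ranking eligibility."""
    # A judge above the error threshold is tripped out of the ensemble.
    healthy = [j for j in judges if j.error_rate_2h <= max_error]
    families = {j.vendor_family for j in healthy}
    full = len(healthy) >= min_judges and len(families) >= min_families
    return {
        "judge_mode": "ensemble" if full else f"{len(healthy)}-judge",
        "provisional": not full,
        "rank_eligible": full,
    }
```

Under these assumptions a Phase 0 run with one judge reports `1-judge`, provisional, and not eligible for ranking, which matches the status described in the last bullet.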

Drift detection

Per-axis drift is monitored with a four-stack:

| Stack | Purpose | Tunable |
|---|---|---|
| MAD-z | Outlier flag | z > 3.5 |
| CUSUM | Sustained shift | k = 0.5σ, h = 5σ |
| Page-Hinkley | Change-point | λ = 50 |
| Mann-Whitney U + BH | Distribution test + FDR control | monthly FP < 0.5% |
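To make the first three rows concrete, here is a rough sketch of each detector using the textbook formulations and the tunables from the table. Whether GetAI uses exactly these variants is an assumption: the 0.6745 scaling is the common modified z-score convention, the CUSUM is the standard two-sided tabular form, and Page-Hinkley is shown for upward shifts only. The Mann-Whitney U + BH row is left out to keep the example short.

```python
import statistics


def mad_z_outliers(values, threshold=3.5):
    """Indices whose modified z-score (median/MAD based) exceeds the threshold."""
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread to scale by; nothing can be flagged
    return [i for i, v in enumerate(values)
            if abs(0.6745 * (v - med) / mad) > threshold]


def cusum_alarm(values, target, sigma, k=0.5, h=5.0):
    """Two-sided CUSUM with k and h in units of sigma; index of first alarm or None."""
    hi = lo = 0.0
    for i, v in enumerate(values):
        z = (v - target) / sigma
        hi = max(0.0, hi + z - k)   # accumulates upward drift
        lo = max(0.0, lo - z - k)   # accumulates downward drift
        if hi > h or lo > h:
            return i
    return None


def page_hinkley_alarm(values, delta=0.0, lam=50.0):
    """Page-Hinkley test for an upward mean shift; index of first alarm or None."""
    mean = 0.0
    cum = 0.0
    min_cum = 0.0
    for i, v in enumerate(values, start=1):
        mean += (v - mean) / i          # running mean
        cum += v - mean - delta         # cumulative deviation from the mean
        min_cum = min(min_cum, cum)
        if cum - min_cum > lam:
            return i - 1
    return None
```

Note that λ = 50 is only meaningful relative to the scale of the scores being monitored, so the same threshold behaves very differently on a 0 to 1 axis than on a 0 to 100 axis.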

Silent update probe (D5)

Vendors swap models without telling you. GetAI catches it via 2-of-3 signal fusion:

- S1 — header hash: SHA-256 over canonicalised response headers (CDN noise stripped).

- S2 — fingerprint cosine: embeddings of model self-identification responses; threshold 0.08.

- S3 — vendor notes scraper: changelog + release notes parsing.

Two of three must trigger to raise an incident. Single-signal trips are queued for review but never auto-published.
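The fusion logic can be sketched as follows. Several details are assumptions made for illustration: the set of headers treated as CDN noise, the reading of 0.08 as a cosine distance above which S2 triggers, and all function names. The S3 scraper is represented only by a boolean.

```python
import hashlib
import math

# Hypothetical list of volatile headers to strip before hashing.
VOLATILE_HEADERS = {"date", "age", "cf-ray", "x-request-id", "set-cookie"}


def header_hash(headers):
    """S1: SHA-256 over lower-cased, sorted headers with volatile ones removed."""
    kept = sorted(
        (k.strip().lower(), v.strip())
        for k, v in headers.items()
        if k.strip().lower() not in VOLATILE_HEADERS
    )
    canonical = "\n".join(f"{k}:{v}" for k, v in kept)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def cosine_distance(a, b):
    """S2 helper: 1 - cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("zero-length embedding")
    return 1.0 - dot / norm


def fuse(s1_changed, s2_changed, s3_changed):
    """2-of-3 vote: incident on two or more signals, manual review on exactly one."""
    votes = sum([bool(s1_changed), bool(s2_changed), bool(s3_changed)])
    if votes >= 2:
        return "incident"
    return "review" if votes == 1 else "ok"
```

A caller would compare `header_hash` against the last known value for S1 and check `cosine_distance(baseline, current) > 0.08` for S2 before passing both results to `fuse`.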

Evidence chain

Each trial produces a content-addressable Evidence Bundle:

- manifest.json — canonical orjson, sorted keys, naive UTC

- {inputs,outputs,tool_events,judge_verdicts,scores}.ndjson

- SIGNATURES.json — SHA-256 of manifest, optional vendor sigs

- merkle_proof.json — leaf hash + sibling path + root

- attribution.json — phase, judge_mode, comparability marker
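To show how such a bundle could be checked, the sketch below hashes a manifest canonically and verifies a Merkle inclusion proof. It is an illustration under stated assumptions: the standard-library `json` module with sorted keys stands in for the canonical orjson encoding, and the proof layout (a list of sibling hashes tagged `"left"` or `"right"`, with parents formed by hashing the concatenated children) is hypothetical rather than the documented `merkle_proof.json` schema.

```python
import hashlib
import json


def manifest_hash(manifest):
    """SHA-256 of a key-sorted, compact JSON encoding (stand-in for canonical orjson)."""
    data = json.dumps(
        manifest, sort_keys=True, separators=(",", ":"), ensure_ascii=False
    ).encode("utf-8")
    return hashlib.sha256(data).hexdigest()


def verify_merkle_proof(leaf_hex, path, root_hex):
    """Recompute the root from a leaf hash and its sibling path.

    path: list of (sibling_hex, side) pairs, where side says on which side
    the sibling sits when the two hashes are concatenated.
    """
    node = bytes.fromhex(leaf_hex)
    for sibling_hex, side in path:
        sibling = bytes.fromhex(sibling_hex)
        pair = sibling + node if side == "left" else node + sibling
        node = hashlib.sha256(pair).digest()
    return node.hex() == root_hex
```

Because the keys are sorted before hashing, two manifests with the same content but different key order produce the same digest, which is what makes the bundle content-addressable.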

Non-goals (Codex-pruned)

- Carbon estimation, public hash-chain ledger, DOI/academic tier

- Live replay UI, DAO marketplace, long-context-only track

- Auto-routing bandit, three-region deployment, full SOC 2 in v1

- Legal / medical content generation

- A single universal "AI score" — every score has a context

"Don't make a feature-richer aistupidlevel. Make the AI regression system that survives a procurement review."